Module 05 Framework Mastery: Build, Measure, Ship AI

The PM's AI Operating System · Module 05 of 07 · Bridge Issue · Week 10 of 18

Module 05 · Free Preview + Paid Deep Dive

Your AI feature shipped. Week one looked fine. Then something broke. This module is about what you do next, and what you wish you had set up before it happened.

Module 04: You told me exactly what breaks first

“You have an AI feature in production. Something unexpected happens with the output. What is the first thing you do, and what do you wish you had set up before it happened?”

The replies came in fast. Three patterns showed up in almost every one.

The instrumentation was not in place, so nobody could tell whether the problem was the model or the product.

The team defaulted to “retrain the model” before checking whether users had even noticed.

And the person who owned the outcome had already moved on to the next sprint.

Module 05 is the answer to all three.

Hey folks,

I want to start with something honest. Shipping an AI feature is NOT the hard part.

Most product teams can get something into production. The hard part is the 30 days after you ship: when real user behavior starts showing up, when the model outputs things you did not expect, and when you have to decide whether what you are seeing is a kill signal or a fix signal.

I have been on both sides of that call. I have killed features I should have fixed. I have fixed features I should have killed. Both mistakes cost sprint capacity, stakeholder trust, and user goodwill.

The difference between getting it right and getting it wrong almost always comes down to one thing: what you had instrumented before the first user ever touched the feature.

Module 05 is about exactly that. It is the framework I now use to run every AI feature post-ship. And for the first time in this curriculum, part of it sits behind the paid tier, because PMs who are already shipping and managing live AI features are exactly who the paid tier is built for.

What is in Module 05: how it is split and what is behind the paywall

You can no longer be a PM who is good in spite of AI. You have to be a PM who is good because of it. And that starts with owning what happens after the feature ships.

LogRocket PM Report, February 2026

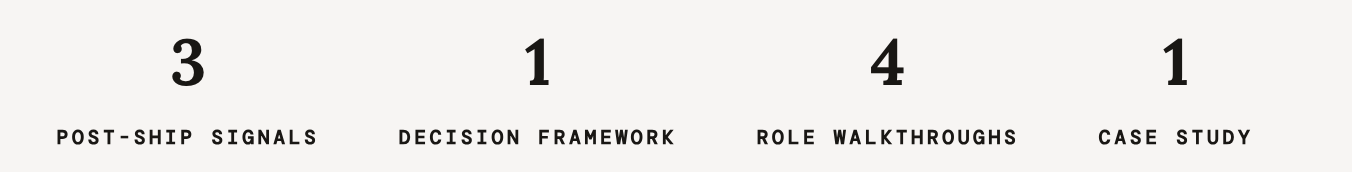

The free section gives you the problem statement and the framework overview. You will understand what breaks post-ship and why most PMs are not set up to catch it. The paid section gives you the full working tools: the three post-ship signal diagnostics, the kill-or-fix decision framework, the heavy-user margin check, all four role walkthroughs, and the full case study.

The Problem

Week one looks fine. Week four tells the truth.

Here is how the first month after shipping an AI feature usually plays out 👇🏽

The feature goes live on a Monday. The engineering team watches the dashboards. The model accuracy looks good. The first few hundred users interact with it. The PM sends a launch note to stakeholders. Week one closes and everyone moves on to the next sprint.

Then week four arrives. Adoption is lower than the launch week suggested. A handful of support tickets reference the AI feature directly. One power user sent a specific note about a wrong output they got in week one. And now the PM is trying to reconstruct what happened from incomplete instrumentation, sprint notes, and a Slack thread nobody archived.

This is not a rare scenario. According to the most recent Mindset AI research across dozens of product teams, only 39% of organizations report measurable bottom-line impact from their AI features. The gap is almost never the model; it is the absence of the right observability before launch.

The problem has three layers.

First, most teams instrument model performance but not product performance. They watch accuracy. They do not watch trust recovery, output acceptance rate, or downstream outcome rate.

Second, when something breaks, the default response is to involve the ML [machine learning] team and talk about retraining. That is the right answer maybe 20% of the time. The other 80% is a product design or product scope problem that does not need a new model; it needs a different decision.

Third, nobody owns the outcome 30 days after ship. The team has moved on. The data is sitting in dashboards nobody is watching.
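To make the first layer concrete, here is a minimal Python sketch of two of those product metrics computed from a feature event log. It assumes a hypothetical log where each AI output shown to a user is one record with an `accepted` flag (the user kept the output rather than discarding or rewriting it) and an `outcome_completed` flag (the downstream task the feature exists for actually happened). The schema, field names, and metric definitions are illustrative assumptions, not a standard; trust recovery is left out because its definition depends heavily on the product.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class OutputEvent:
    """One AI output shown to a user (hypothetical schema, for illustration only)."""
    output_id: str
    accepted: bool           # user kept/used the output instead of discarding or rewriting it
    outcome_completed: bool  # the downstream task the feature exists for actually happened


def acceptance_rate(events: list[OutputEvent]) -> float:
    """Share of shown outputs that users accepted (0.0 if nothing was shown)."""
    if not events:
        return 0.0
    return sum(e.accepted for e in events) / len(events)


def downstream_outcome_rate(events: list[OutputEvent]) -> float:
    """Share of *accepted* outputs that led to the downstream outcome."""
    accepted = [e for e in events if e.accepted]
    if not accepted:
        return 0.0
    return sum(e.outcome_completed for e in accepted) / len(accepted)


if __name__ == "__main__":
    week_one = [
        OutputEvent("a", accepted=True, outcome_completed=True),
        OutputEvent("b", accepted=True, outcome_completed=False),
        OutputEvent("c", accepted=False, outcome_completed=False),
        OutputEvent("d", accepted=True, outcome_completed=True),
    ]
    print(f"acceptance rate: {acceptance_rate(week_one):.2f}")                  # → 0.75
    print(f"downstream outcome rate: {downstream_outcome_rate(week_one):.2f}")  # → 0.67
```

One design choice worth noting in this sketch: the outcome rate uses accepted outputs as its denominator, so it separates "users rejected the output" from "users accepted it, but it did not move the outcome." Model accuracy on its own would not distinguish those two cases.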

Module 05 is the system that closes all three gaps. The full system (the diagnostics, the decision framework, the margin check, and the role walkthroughs) lives in the paid section below.

Curriculum Shift: Hands-On Tools

This is where the curriculum shifts from reading about the problem to having the tools to solve it. Paid subscribers get the full working system: the diagnostics, the decision framework, the margin check, all four role walkthroughs, and the case study.

What you get with a paid subscription

The three post-ship signals with full diagnostic questions

The kill-or-fix decision framework with the heavy-user margin check

Role walkthroughs: New PM, Senior PM, Director, CPO

Full case study with the decision trail

All of Modules 06 and 07 when they drop

Full template and framework vault

![Module 05 contents: five sections, one free and four for paid subscribers](https://substackcdn.com/image/fetch/$s_!mhEG!,w_1456,c_limit,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2F29ed4c5f-3d78-4206-a266-d38a19fae8d0_1168x1206.png)

1. The post-ship moment nobody prepares for (Free, below)
What actually happens in week one after your AI feature goes live, and why most PMs are not instrumented to see it clearly.

2. The 3 post-ship signals most PMs misread (🔒 Paid subscribers)
The patterns that look like model problems but are actually product problems, and the diagnostic question that tells you which one you are dealing with.

3. The kill-or-fix decision framework (🔒 Paid subscribers)
The four-question sequence I run every time something unexpected happens post-ship, with the heavy-user margin check built in: the one question your CFO [chief financial officer] is already asking.

4. Role walkthroughs: New PM through CPO (🔒 Paid subscribers)
What this framework looks like in practice at four different levels, including how to present a kill decision to a stakeholder who funded the feature.

5. Full case study: the feature that should have been killed in week two (🔒 Paid subscribers)
A Senior PM runs the kill-or-fix framework on a live AI feature, catches the margin problem before it hits the board, and makes the call. Full decision trail included.